What It Means to Bet on Intelligence

Markets are pricing transformation while thinking in continuity

Across markets, policy circles, and corporate strategy, a strange consensus has formed. It is rarely stated outright, but it shows up everywhere in behavior: the belief that artificial general intelligence (and perhaps something beyond it) will arrive soon enough to justify restructuring the world in advance.

This is not an “AI boom” in the familiar sense. It is not a sector rotation or a productivity cycle. It is not merely a new software category bolted onto an old economy. It is a wager, coordinated and global and increasingly total, that intelligence itself is about to become cheap, abundant, and non-human.

Look at what we are doing. Look at the scale of capital expenditure. Look at the urgency of policy. Look at the concentration in markets. Look at the supply chains being rebuilt. Look at the energy grid build-outs being justified. These are not the behaviors of a system deploying “a helpful tool.” These are the behaviors of a system racing toward a new baseline.

Even if you personally dismiss the idea of AGI, the world is already allocating capital as if some version of it is close enough to matter. And whether you believe it or not, if your money is positioned around AI, that is the bet your money is making.

Most investment narratives are downstream narratives. They start with a product, a market, a set of competitors, and then project forward. They assume the world stays sufficiently similar that the question “who wins?” is the right question. But the AI wager is different. It is upstream. It is not about products or platforms. It is about the substrate: who or what does work, makes decisions, perceives information, sets goals, and executes tasks.

Markets are usually comfortable with change inside a stable container. This is the uncomfortable case: the container itself is changing.

Two Kinds of Bets

When people say “I’m all-in on AI,” they often mean one of two things. Either they think they’re betting on a productivity boom within a mostly familiar economy, or they are betting on discontinuity without realizing it. The difference matters, because it determines what kind of risk they are actually exposed to.

There is psychological comfort in framing AI like prior tech waves. Television, then personal computers, then the internet, then smartphones. In that sequence, the shape of the world changes, but it changes in recognizable increments. You can tell a story. You can identify winners. You can model demand in units that make sense.

AI invites people to use that same template: “Sure, things will change, but it’ll be mostly the same companies with new tools, and we’ll all just do our jobs faster.” That framing keeps the whole thing emotionally manageable. But if you take the strongest version of the AI thesis seriously, even for a moment, you run into a harder implication. The next phase is not “today, but with more efficiency.” The next phase could be to computers what computers were to ledgers and typewriters. A shift so fundamental that the old categories stop being the right way to think about what’s happening.

A trend is incremental and reversible. A wager is total and path-dependent. If the world is betting on AI, it is not betting on chatbots. It is betting on a future where the cognitive premium collapses. Where thinking, perceiving, and deciding become abundant resources rather than human bottlenecks. That future does not have to be utopian or dystopian to be destabilizing. It only has to be unfamiliar. And unfamiliar is exactly what breaks the models investors rely on.

When Categories Dissolve

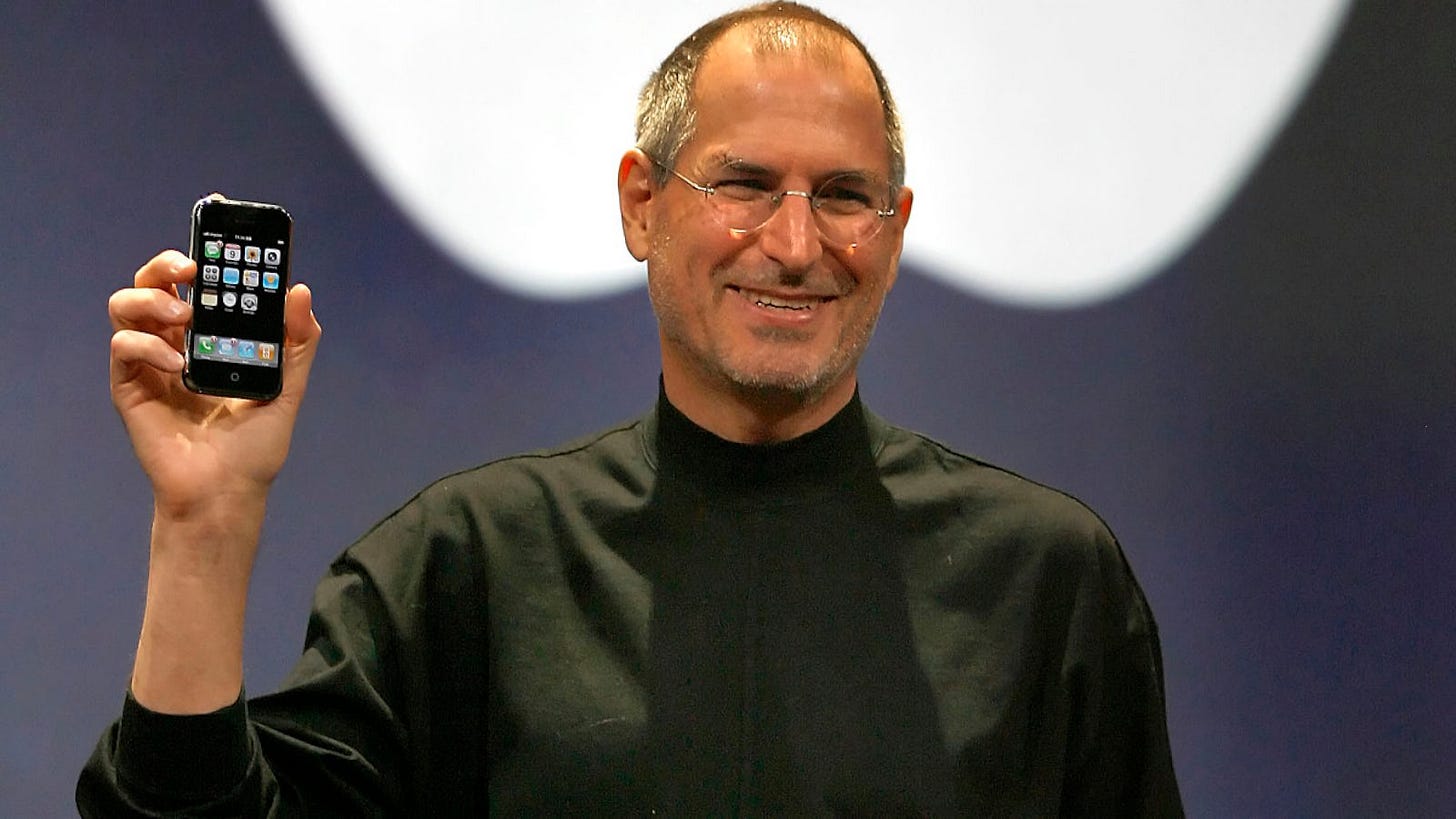

Consider Apple. Not as a critique, but as an example of how form follows constraint. The iPhone is one of the most successful products in history. The ecosystem is real. The distribution is real. The brand equity is real. The lock-in is real. And yet the smartphone itself is not a fundamental object of nature. It is not a permanent category.

It was a solution to a specific upstream constraint: humans needed mobile access to computation. Once that constraint existed, everything else followed downstream. The slab form factor, touch interfaces, app icons, notification economies, screens as the place where life happens. The phone was not destiny. It was an answer to a problem.

Which means if the problem changes, the answer changes too.

What happens to phones if intelligence stops requiring a screen to interact with? If intelligence becomes ambient, woven into environments, objects, interfaces that respond to voice, gesture, context? The smartphone’s central role as the portal to computation becomes less obvious. The interface metaphors we use (icons, apps, tapping, typing) begin to look like transitional scaffolding rather than permanent architecture.

Evaluating Apple primarily through “units sold” and “ecosystem penetration” starts to resemble evaluating Kodak’s distribution network in 2005. Technically accurate, but operating inside a frame that is already dissolving. The risk is not that incumbents collapse instantly. The risk is that they succeed under assumptions that no longer hold, until the world quietly moves to a different set of objects entirely.

And if the smartphone is transitional scaffolding, so is the quarterly earnings report. So is the discounted cash flow model. So is the fundamental assumption that categories remain legible long enough for analysis to matter.

The Interpretability Problem

Fundamentals rest on hidden assumptions of continuity. They assume the unit of value remains stable: that products, services, and categories persist in recognizable form. They assume the production function remains legible: that labor, capital, and technology combine in ways that can be measured and projected. They assume the distribution of agency remains familiar: that humans decide and systems execute. They assume the interface between intention and outcome stays consistent: that plans, budgets, and cycles operate on timescales that allow for correction.

AI, if it works in a deep way, pressures all of these at once.

Cash flows may still exist. Revenue may still grow. Balance sheets may still matter. But the interpretability of those numbers becomes shakier because the structure producing them is in flux. A discounted cash flow model requires a world that resembles its past closely enough that extrapolation is meaningful. It requires stable definitions of “work,” stable competitive dynamics, stable timescales for iteration and response.
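To make that dependence concrete, here is a minimal sketch of a textbook discounted cash flow calculation in Python. The function name `dcf_value`, its parameters, and the sample inputs are illustrative assumptions, not figures from any real company. It uses a standard Gordon growth terminal value and reports how much of the total rests on the assumption that the business persists in recognizable form indefinitely.

```python
def dcf_value(cash_flow, growth, discount, years, terminal_growth):
    """Return (total present value, share of that value from the terminal term).

    All inputs are hypothetical: a starting annual cash flow, a constant
    growth rate over the explicit forecast, a discount rate, the number of
    forecast years, and a perpetual growth rate after the forecast ends.
    """
    if discount <= terminal_growth:
        raise ValueError("discount rate must exceed terminal growth")

    explicit_pv = 0.0
    cf = cash_flow
    for t in range(1, years + 1):
        cf *= 1 + growth                      # projected cash flow in year t
        explicit_pv += cf / (1 + discount) ** t

    # Gordon growth terminal value: assumes the cash flows continue forever,
    # growing at a constant rate. This is the continuity assumption in code.
    terminal = cf * (1 + terminal_growth) / (discount - terminal_growth)
    terminal_pv = terminal / (1 + discount) ** years

    total = explicit_pv + terminal_pv
    return total, terminal_pv / total


# Illustrative inputs only.
total, terminal_share = dcf_value(
    cash_flow=100.0, growth=0.05, discount=0.08, years=10, terminal_growth=0.03
)
print(f"total value: {total:.1f}, share from terminal value: {terminal_share:.0%}")
```

With these sample inputs, more than half of the computed value sits in the terminal term, which is exactly the part that presumes the world keeps resembling its past.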

The AI thesis implies a world where those definitions can shift. Where the pace of iteration accelerates beyond human-legible cycles. Where the bargaining power of labor changes because the nature of labor changes. Where the meaning of “competitive moat” becomes unstable because the constraints that created moats in the first place no longer bind in the same way.

You can still model companies in that world. But you are modeling them inside an assumption: the belief that continuity holds long enough for the model to matter.

If you own AI beneficiaries while dismissing the discontinuity risk, you are doing something incoherent: betting on transformation while thinking in terms of continuity.

This incoherence shows up most clearly when you think about what happens to the participants themselves.

The End of Human-Readable Finance

Finance today still operates on human-centric assumptions: sentiment, fear and greed, narratives, behavioral cycles, the idea that markets move because humans make emotional mistakes that can be exploited. Even systematic strategies are often framed as arbitraging predictable human irrationality. But those assumptions rest on a specific structure: that humans are the primary agents making decisions, that their psychology is the explanatory layer.

Now imagine a shift in participation. Not humans picking stocks, but humans setting constraints and objectives (risk tolerance, liquidity needs, time horizons, tax considerations, ethical preferences) and then delegating execution to systems that optimize continuously on their behalf. Not advisors researching positions, but advisors parameterizing AI agents. Orders routed algorithmically. Execution handled by bots trading against other bots.

The result is not “long-term investing” versus “short-term speculation.” It is continuous optimization. Portfolios that rebalance constantly, adjusting to microscopic edges, responding in timeframes that make quarterly earnings cycles feel glacial. Strategy that updates like software rather than like doctrine.
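A toy sketch can show what "setting constraints and delegating execution" looks like at its simplest. Everything here is a hypothetical illustration: the `Mandate` class, the `rebalance_orders` function, and the sample portfolio are assumptions made for this example, and a real system would also account for costs, taxes, and liquidity. The human supplies target weights and a drift tolerance; the code decides when and how much to trade.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mandate:
    """Human-set constraints (hypothetical structure for illustration)."""
    target_weights: dict      # asset name -> target fraction of the portfolio
    drift_tolerance: float    # largest allowed absolute deviation from target


def rebalance_orders(mandate, holdings):
    """Return asset -> amount to buy (+) or sell (-) in currency units.

    Returns an empty dict when every asset is within the drift tolerance,
    so the function can be called as often as prices update.
    """
    total = sum(holdings.values())
    if total <= 0:
        return {}

    assets = set(mandate.target_weights) | set(holdings)
    drift = {
        a: holdings.get(a, 0.0) / total - mandate.target_weights.get(a, 0.0)
        for a in assets
    }
    if max(abs(d) for d in drift.values()) <= mandate.drift_tolerance:
        return {}

    # Trade each asset back to its target weight.
    return {a: -d * total for a, d in drift.items() if d != 0}


mandate = Mandate(target_weights={"stocks": 0.6, "bonds": 0.4}, drift_tolerance=0.05)
orders = rebalance_orders(mandate, {"stocks": 70.0, "bonds": 30.0})
print(orders)
```

The human judgment lives entirely in the `Mandate`. Nothing in the loop itself waits for a quarterly review, which is the point the passage above is making.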

At that point, “investor psychology” stops being the explanatory layer. The participants have changed. Markets don’t disappear. Capital still allocates. Price discovery still happens. But the human intuition that once governed financial behavior becomes vestigial. Like knowing how to start a fire in a world with electricity. Fire still matters. It is still foundational to how the world works. But it is no longer a skill most people need to practice directly.

Finance could follow the same path: still central, still powerful, but no longer human-readable at the point of action. And this is not speculative futurism. This is precisely the kind of future implied by the strongest version of the AI thesis. The version that justifies the scale of capital currently being deployed. If you are positioned for AI, this is what you are exposed to, whether you have thought it through or not.

The Paradox No One Holds

Which leads to a paradox most people refuse to hold in mind simultaneously: they want AI to work, and they want the world to remain familiar. But if AI works meaningfully, the transition will not just improve the old system. It will recompose it.

The better the technology works, the faster legacy assumptions break. Business models that assume stable consumer interfaces. Labor markets that assume human scarcity. Valuation frameworks that assume stable categories. Financial narratives that assume human participation as the center of gravity. All of these become unstable not because they are wrong, but because the conditions that made them right are shifting.

A lot of AI enthusiasm is really optimism about smoothness. The hope for fast productivity gains, rising margins, better services, a handful of mega-winners that compound cleanly, markets that absorb the shift without disruption. But smoothness is not the default in regime transitions. In fact, the more profound the change, the less smooth the adjustment tends to be. Institutional lag. Stranded capital. Dislocated labor. Mispriced assets. Winners that seem obvious until the frame shifts and they aren’t anymore.

This is simply how systems behave when their underlying assumptions move faster than their narratives can update. The danger is not “everything crashes.” The danger is “many things stop making sense.” And that alone is enough.

What You’re Actually Betting On

The greatest risk, then, is not refusing to bet on AI. The greatest risk is betting on AI while assuming the world that emerges will be the world you know how to navigate. Many investors have already made the wager, whether they admit it or not. Few have followed it to its logical conclusion. If intelligence is about to change form, if the human bottleneck dissolves and cognition becomes infrastructure, then products are provisional, frameworks are temporary, and the fundamentals you rely on may already be describing a world that is passing.

Markets are not pricing a tool. They are pricing a substrate. And substrate changes do not allow you to keep your old map.

The next time someone tells you AI will boost productivity by 20%, ask yourself what assumptions that number contains. Ask what it takes for granted about the structure of work, the definition of output, the stability of competitive dynamics, the continuity of categories. Ask whether those assumptions will still hold when the productivity gain arrives.

Because if the wager is right, if intelligence really does become the kind of commodity electricity became, then the world being measured and the world doing the measuring may not be the same world at all.

And if you think you’re betting on AI while preserving your role as an analyst, an allocator, a decision-maker with discretion and judgment, ask yourself whether “setting constraints for optimization” is a skill that remains scarce when the systems you’re constraining become better at setting their own constraints. Even the meta-layer may be temporary. Even knowing what questions to ask may become a shrinking domain.

The wager is not just about what AI does. It is about what remains for humans to do. And most people positioning for the former have not seriously considered the latter.