Most AI coverage falls into two camps: breathless hype or dismissive skepticism. This is neither.

This is a report about a specific company — Anthropic — at a specific inflection point, and what that inflection point means for investors, for the technology industry, and for the broader shift in how the world computes. The events of the past two weeks have made this conversation impossible to avoid.

Here’s the short version: Anthropic built the most powerful AI model ever documented, refused to release it to the public, handed it to more than fifty of the world’s largest technology organizations to use as a cybersecurity weapon, and in doing so positioned themselves as the de facto gatekeeper for the next era of AI capability. Meanwhile, their revenue is growing faster than that of any private technology company in history, they just closed the second-largest private financing round ever recorded, and a public offering could come as early as October.

You don’t need to follow AI closely to understand why this matters. You just need to follow power.

Background: Who Is Anthropic?

Anthropic was founded in 2021 by Dario Amodei, his sister Daniela Amodei, and several colleagues who left OpenAI — the company behind ChatGPT — over concerns about the pace and safety of AI development. The founders believed that the most powerful AI systems being built represented genuine risks, and that building them responsibly required a different organizational structure and a different set of priorities than what they saw at OpenAI.

That founding tension — between capability and safety, between moving fast and moving carefully — has defined everything Anthropic has done since. The company is structured as a Public Benefit Corporation, meaning its board has legal authority to prioritize safety over shareholder returns. That’s not boilerplate. It’s a structural constraint with real teeth.

Their product is Claude: a family of AI models that competes directly with ChatGPT, Google’s Gemini, and other large language models. Claude powers a conversational assistant at claude.ai, a developer API, and several enterprise products including Claude Code — an AI coding tool that has become one of the fastest-growing software products in the industry.

For most of 2024 and early 2025, Anthropic was the credible but smaller alternative to OpenAI. Technically respected, safety-conscious, enterprise-friendly. The second choice.

That characterization no longer applies.

The Leak

The story of Anthropic’s extraordinary 2026 begins not with an announcement, but with an accident.

On March 26, Fortune reporter Bea Nolan discovered that Anthropic had left close to 3,000 unpublished files — including a draft blog post about an unreleased model — in a publicly accessible data cache on the company’s own website. Two cybersecurity researchers, Roy Paz of LayerX Security and Alexandre Pauwels of the University of Cambridge, separately reviewed the documents at Fortune’s request and confirmed what they contained.

The model had two internal names: “Capybara” as the new tier designation and “Mythos” as the specific model name. The draft described it as something beyond an incremental update — a new tier entirely, sitting above Anthropic’s existing Opus line, which had been their most capable models until that point. The document called it “by far the most powerful AI model we’ve ever developed” and warned that it posed “unprecedented cybersecurity risks.”

Anthropic confirmed the model’s existence that same day, calling it “a step change” in AI performance and “the most capable we’ve built to date.” They attributed the exposure to “human error in the CMS configuration” and said it was unrelated to any of their AI tools.

Five days later, on March 31, Anthropic’s coding tool Claude Code shipped with approximately 500,000 lines of internal source code accidentally bundled into the package. A second leak in five days, at a company whose new AI model was defined by its cybersecurity capabilities. The irony was impossible to miss, and the internet made sure everyone saw it.

Accident or not, the effect was a controlled detonation of the news cycle. By the time the formal announcement came on April 7, the narrative was already set, the benchmarks were already anticipated, and the conversation had already shifted from “does this exist?” to “who gets access?”

That framing — access scarcity as the central question — was exactly what Anthropic needed.

What Mythos Actually Is

Before the numbers, some context on what it means for an AI model to be capable at cybersecurity.

Modern software runs on millions of lines of code, much of it decades old, written by humans who couldn’t anticipate every interaction, every edge case, every malicious input. The gaps between intended behavior and actual behavior are called vulnerabilities. Finding them has historically required highly skilled human researchers working for months. Weaponizing them — turning a discovered vulnerability into a working exploit — requires even more skill and time.

Claude Mythos changes that calculus.

During testing, Anthropic found that Mythos can identify and then exploit zero-day vulnerabilities — meaning previously unknown, unpatched flaws — in every major operating system and every major web browser. An engineer with no security training used the model to generate a working remote code execution exploit overnight. Mythos discovered a 27-year-old vulnerability in OpenBSD, an operating system famous for its security hardening. It found a 16-year-old bug in FFmpeg that had survived five million previous automated scans. In one documented case, it wrote a browser exploit that chained together four separate vulnerabilities, constructing a complex attack that escaped both the browser’s internal sandbox and the operating system’s sandbox.

This isn’t a tool that helps security researchers work faster. It’s a tool that can do their job autonomously, at scale, around the clock, on every system at once.

Anthropic’s own team described it bluntly: Mythos is “currently far ahead of any other AI model in cyber capabilities” and “presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.”

They built it anyway. Then they decided what to do about it.

The Numbers: Why This Is a Different Kind of Leap

Benchmarks get gamed. Every AI lab knows how to optimize for them, and every informed reader knows to discount them accordingly. So when a set of scores falls this far outside the normal distribution of AI progress, it deserves more than the usual skepticism — it deserves explanation.

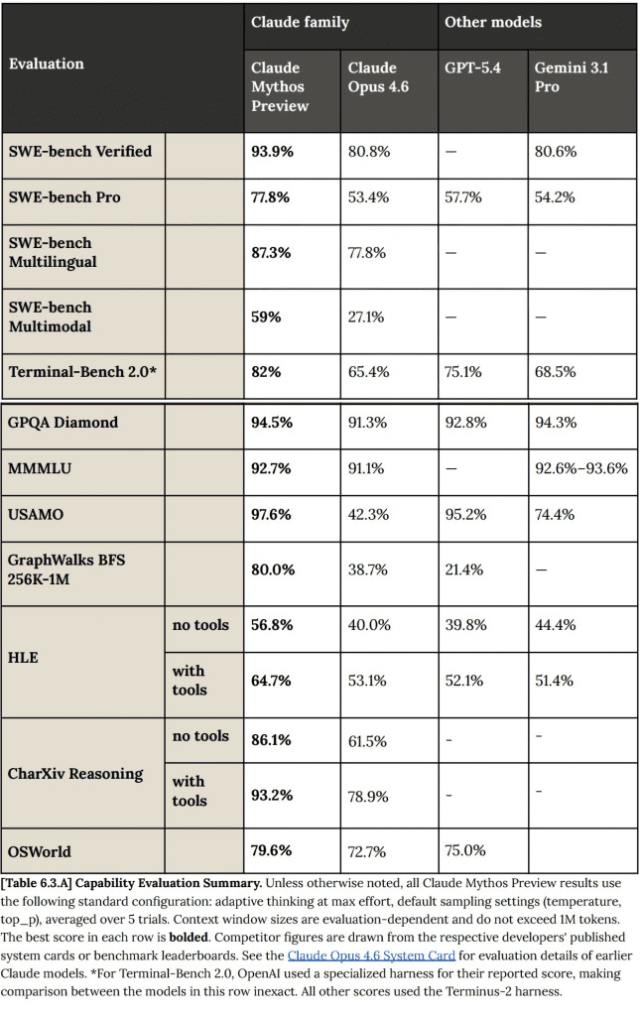

Claude Mythos Preview is the most capable AI model ever publicly documented. The scores don’t just lead the competition — they redefine where the frontier is.

Coding

SWE-bench Verified is the benchmark that matters most for real-world software capability. It tests a model’s ability to resolve actual GitHub issues in production codebases — not toy problems, not synthetic exercises. Mythos scores 93.9%, a 13.1 percentage-point jump over Opus 4.6’s 80.8% and the highest score ever recorded on the benchmark. On SWE-bench Pro, the harder variant, Mythos hits 77.8% — more than 20 points ahead of GPT-5.4’s 57.7%. On SWE-bench Multimodal, which requires understanding screenshots and visual context alongside code, Mythos scores 59.0% against Opus 4.6’s 27.1%, more than doubling the previous state of the art.

A 20-point lead on the hardest coding benchmark means this model handles real engineering complexity at a level nothing else approaches. That’s not incremental. That’s a generational gap.

Mathematics

This is where the numbers stop looking like benchmark optimization and start looking like something else entirely. On USAMO 2026 — the USA Mathematical Olympiad, a proof-based competition for the world’s most gifted young mathematicians — Mythos scores 97.6%. Opus 4.6 scores 42.3%. GPT-5.4 scores 95.2%, itself an impressive number. Mythos leads everything.

That’s a 55-point improvement over Anthropic’s previous best model. In competition mathematics. In a single model generation.

For context: the USAMO isn’t multiple choice. It requires constructing original mathematical proofs from scratch. Jumping from “solves less than half” to “misses almost nothing” is a qualitative change in how a system reasons, not a quantitative one.

Cybersecurity

On Cybench — 35 challenges from four cybersecurity competitions — Mythos achieves a 100% success rate across all trials. Anthropic notes this benchmark is “no longer sufficiently informative” because the model saturated it completely, and they’ve had to develop harder tests. On CyberGym, which tests targeted vulnerability reproduction in real open-source software, Mythos scores 83.1% compared to Opus 4.6’s 66.6%.

Long Context and Agents

On GraphWalks BFS, which tests reasoning over million-token contexts, Mythos scores 80.0% against GPT-5.4’s 21.4%, nearly four times the competitor’s score. On BrowseComp, which tests web navigation and synthesis for real-world agentic tasks, Mythos leads significantly while using one-fifth as many tokens as competitors.
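The gaps quoted in the sections above are easy to recompute. The sketch below takes the scores exactly as this report states them (nothing is independently verified) and derives the point gap and score ratio for each pairing. The `SCORES` table and the `gap_table` helper are illustrative names, not anything published by Anthropic.

```python
# Scores (percent) as quoted in this report; they are inputs, not verified data.
SCORES = {
    "SWE-bench Verified": {"Mythos": 93.9, "Opus 4.6": 80.8},
    "SWE-bench Pro": {"Mythos": 77.8, "GPT-5.4": 57.7},
    "SWE-bench Multimodal": {"Mythos": 59.0, "Opus 4.6": 27.1},
    "USAMO 2026": {"Mythos": 97.6, "Opus 4.6": 42.3, "GPT-5.4": 95.2},
    "CyberGym": {"Mythos": 83.1, "Opus 4.6": 66.6},
    "GraphWalks BFS": {"Mythos": 80.0, "GPT-5.4": 21.4},
}


def gap_table(scores, leader="Mythos"):
    """Return (benchmark, rival, point_gap, ratio) rows for the leader vs. each rival."""
    rows = []
    for bench, results in scores.items():
        top = results[leader]
        for model, score in results.items():
            if model == leader:
                continue
            # Point gap in percentage points; ratio is leader score / rival score.
            rows.append((bench, model, round(top - score, 1), round(top / score, 2)))
    return rows


if __name__ == "__main__":
    for bench, rival, gap, ratio in gap_table(SCORES):
        print(f"{bench:22s} vs {rival:9s} +{gap:5.1f} pts  {ratio:.2f}x")
```

Showing the point gap and the ratio side by side matters because they tell different stories: the USAMO lead over Opus 4.6 is about 55 points, while the lead over GPT-5.4 on the same test is only 2.4 points.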

On Contamination

The standard counter to strong benchmark scores is data leakage — the model has seen the test. Anthropic addressed this with extensive memorization screening, filtering flagged problems and retesting on novel “remix versions” of original questions. Mythos maintained its lead at every level, scoring higher on remixed questions than originals. The gains appear genuine.

The pattern across all benchmarks points to one conclusion: Mythos represents a capability discontinuity, not an incremental step. The improvements span coding, mathematics, reasoning, long context, and agentic task completion simultaneously. Models don’t advance on every front at once from fine-tuning or benchmark optimization. Something qualitatively changed in how this model reasons.

Which returns us to why Anthropic didn’t release it. A publicly available model that is 20 points ahead on benchmarks creates a different risk profile than a tightly controlled model that is 55 points ahead. The restriction is a statement about capability thresholds: that there is a level of general reasoning power where the standard release calculus no longer applies. Anthropic is the first lab to make that call publicly, credibly, and with receipts. Whether you see that as genuine responsibility or elegant positioning, the outcome is the same: they get the credit for both building it and containing it.

Industry Reactions on X

The announcement landed on April 7 and the internet responded the way it always does — loudly, in every direction at once.

Cybersecurity people were alarmed. The Cybench 100% saturation number spread fast, and the general read from that community was some version of: this thing can find and weaponize vulnerabilities at a scale humans can’t match, and calling it “defense-first” doesn’t change what it is. The offense/defense framing felt like spin to people who work in that world.

The builder and VC crowd went the opposite direction. The SWE-bench leap got treated as confirmation that the engineering labor market just changed permanently. More heat than light, but the enthusiasm was genuine.

The irony contingent, predictably, had the most fun. The joke wrote itself: a safety company accidentally leaked the existence of its most dangerous model, and that model’s defining feature is cybersecurity capability. The capybara memes were everywhere within hours.

What crystallized underneath all of it was a grudging consensus: Anthropic won the narrative again. The leak primed the conversation nearly two weeks early. The benchmarks confirmed everything the leak promised. By the time Glasswing dropped, nobody was asking whether this should exist; they were asking who got access. That’s a very specific kind of victory.

The Market Nihilist read: the 48-hour reaction cycle was the final phase of the rollout. Unpaid, distributed, and more effective than any press campaign. Anthropic built the monster, contained it publicly, and let the internet generate both the fear and the demand simultaneously.

Project Glasswing: The Access Layer Forms

On April 7, Anthropic formally introduced Project Glasswing — their answer to the question of what to do with a model too dangerous to release publicly.

Rather than a public launch, Glasswing is a controlled distribution that goes to defenders first. Over 50 technology organizations received access, backed by $100 million in usage credits from Anthropic. The partner list reads like a who’s who of the technology and financial infrastructure that runs the world: Nvidia, Amazon Web Services, Apple, Google, Broadcom, Microsoft, Cisco, CrowdStrike, Palo Alto Networks, JPMorgan Chase, and the Linux Foundation are among the charter members.

The stated mission: use Mythos exclusively for defensive cybersecurity work — scanning their own foundational systems for vulnerabilities, patching what they find, and sharing learnings across the consortium. Google made Mythos Preview available to select partners through its Vertex AI platform. The coalition of companies that are usually competitors sat down together because the shared risk — an AI-powered offensive cyber capability in the wrong hands — outweighed the competitive dynamics.

The framing matters enormously here. Anthropic isn’t just giving out access. They’re deciding who gets it, on what terms, for what purpose, with what accountability structure. That’s a regulatory function. And they’ve assumed it without anyone assigning it to them.

This is the Market Nihilist thesis applied directly: the object level (which specific vulnerabilities get patched, which systems get hardened) is not where the value is accumulating. The value is accumulating at the access layer. Whoever controls which agents go to which players, and when, holds the sovereign moat. Anthropic has positioned itself as the first AI lab to function as a de facto regulatory body, deciding who gets capability and who doesn’t. That’s a new kind of power without a clear precedent.

Anthropic’s 2026: The Fastest Valuation Climb in Private Market History

The Mythos story doesn’t exist in isolation. It’s the capstone of the most dramatic growth run in private technology history.

To understand the scale, start with revenue:

Start of 2025: approximately $1 billion annualized run rate

August 2025: $5 billion+

End of 2025: $9 billion

February 2026: $14 billion

April 2026: $30 billion annualized — surpassing OpenAI’s $25 billion to become the world’s highest-earning AI company

That’s a 30x increase in annual revenue in approximately 15 months. There is no comparable private company growth trajectory in the technology industry.
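To put the 30x figure in more familiar terms, the sketch below converts it into an implied compound monthly growth rate. It assumes the run rate grew at a constant rate from $1 billion to $30 billion over 15 months, which is a simplification (the milestones above show growth was uneven). The function names are illustrative.

```python
def growth_multiple(start_bn, end_bn):
    """Overall multiple between two annualized run rates (USD billions)."""
    return end_bn / start_bn


def compound_monthly_rate(start_bn, end_bn, months):
    """Constant monthly growth rate that turns start_bn into end_bn over `months`."""
    return (end_bn / start_bn) ** (1 / months) - 1


# Figures quoted in this report; the 15-month span (start of 2025 to
# April 2026) is the report's own approximation.
multiple = growth_multiple(1.0, 30.0)
monthly = compound_monthly_rate(1.0, 30.0, 15)

if __name__ == "__main__":
    print(f"{multiple:.0f}x overall, about {monthly:.1%} per month compounded")
```

Under that constant-rate assumption, the run rate would have had to grow by roughly a quarter every month for 15 months straight.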

Valuation followed the same curve:

March 2025: $61.5 billion

September 2025: $183 billion (Series F, $13 billion raised)

February 2026: $380 billion (Series G, $30 billion raised)

That February 2026 round was initially targeted at $10 billion. Investor demand was six times the original target. Anthropic tripled the raise. The round was led by Singapore’s sovereign wealth fund GIC and Coatue Management, with participation from D.E. Shaw, Dragoneer, Founders Fund, ICONIQ, MGX, Nvidia, and Microsoft. It is the second-largest private financing round ever recorded for a technology company, behind only OpenAI’s $40+ billion raise.

The product driving most of this: Claude Code, the AI coding tool, is running at $2.5 billion annually on its own. Over 500 enterprise customers now spend more than $1 million per year. Approximately 80% of Anthropic’s revenue comes from enterprise clients — a healthier, stickier, more defensible mix than OpenAI’s consumer-heavy composition.

For context on valuation: OpenAI is priced at roughly 34 times its annualized revenue. Anthropic, at $380 billion against $30 billion in revenue, is priced at approximately 13 times. On a pure multiple basis, Anthropic is cheaper — and growing faster on the metrics that matter for enterprise durability.
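The multiple comparison is simple division, sketched below with the figures quoted in this report. The OpenAI valuation is not stated above, so the code only derives what a 34x multiple would imply if it were computed against the $25 billion run rate cited earlier. That pairing is an assumption, and the result should be read as a derived illustration rather than a reported number.

```python
def revenue_multiple(valuation_bn, revenue_bn):
    """Valuation divided by annualized revenue (both in USD billions)."""
    return valuation_bn / revenue_bn


def implied_valuation(multiple, revenue_bn):
    """Valuation implied by a revenue multiple (USD billions)."""
    return multiple * revenue_bn


# Anthropic: $380B valuation against a $30B annualized run rate.
anthropic_multiple = revenue_multiple(380.0, 30.0)  # about 12.7x

# OpenAI: ASSUMES the quoted 34x applies to the $25B run rate cited earlier.
openai_implied_bn = implied_valuation(34.0, 25.0)  # 850.0

if __name__ == "__main__":
    print(f"Anthropic: {anthropic_multiple:.1f}x revenue")
    print(f"OpenAI implied valuation at 34x: ${openai_implied_bn:.0f}B")
```

The private valuation and the run rate come from different months (February and April 2026), so the 13x figure mixes a slightly older price with a newer revenue number.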

The Government Dimension: The Most Important Wildcard

This is where the story gets complicated, and where investors need to pay close attention.

Anthropic has been deeply embedded in U.S. government and defense infrastructure. As of February 2026, through a partnership with Palantir and Amazon Web Services, Claude is the only AI model used in classified U.S. military missions. A separate “Claude Gov” model, launched in June 2025, is in active use at multiple national security agencies.

Then the relationship fractured publicly.

Defense Secretary Pete Hegseth demanded Anthropic remove contractual restrictions that prohibited Claude from being used for domestic surveillance and fully autonomous weapons systems. Anthropic refused. On February 26, 2026, Dario Amodei published a statement saying the company would not drop its AI safeguards under government pressure. The following day, President Trump ordered all federal agencies to stop using Anthropic models.

Two days later, it emerged that the U.S. military had used Claude during its strikes on Iran at the outset of the 2026 Iran war — while the ban was nominally in effect. The contradictions were breathtaking in scope.

On March 26, a federal judge granted a preliminary injunction blocking the Pentagon’s designation of Anthropic as a “supply chain risk.” The judge described the government’s actions as “Orwellian” and “classic First Amendment retaliation” — essentially ruling that the Pentagon had attempted to punish Anthropic for criticizing its contracting position.

The injunction is temporary and subject to appeal. The Pentagon has already moved on, striking separate deals with OpenAI and seeking additional AI partners. The underlying dispute — over whether the most powerful AI tools can be used without safety restrictions in warfare and domestic surveillance contexts — is unresolved and will define policy for years.

The investor read: this is a binary risk sitting directly on Anthropic’s government revenue stream, which represents a meaningful share of total revenue. The legal reprieve buys time but doesn’t resolve the conflict. However, there is a counterargument that Anthropic’s public refusal to compromise its safety restrictions is itself a durable competitive advantage — every enterprise subject to regulatory scrutiny, every hospital, every financial institution, every law firm now has a clear signal about which AI vendor will hold the line when pushed. That signal has real commercial value that doesn’t appear in the revenue line yet.

The Bull Case

Enterprise is the right market, and Anthropic is winning it.

OpenAI’s enterprise API market share dropped from 50% to 25% over the past year. Anthropic’s rose from 12% to 32% over the same period. That is not a blip. It’s a structural shift driven by model quality, reliability, and the trust signal that comes from Anthropic’s governance track record.

The safety brand is becoming a genuine moat.

Every enterprise that processes regulated data — in finance, healthcare, law, and government — has a compliance reason to prefer a model backed by Anthropic’s governance structure. The Public Benefit Corporation structure and the refusal to bend on surveillance aren’t PR moves. They are product differentiators that OpenAI structurally cannot replicate, having already completed its transition to a standard for-profit corporation.

Mythos gives Anthropic the cyber high ground.

By building the most powerful offensive security AI ever created and then immediately positioning themselves as the responsible steward of its deployment, Anthropic has locked in a role that no competitor can easily displace. They are simultaneously the most dangerous and the most trusted actor in this space. That duality is not a paradox — it’s a market position.

The path to profitability is cleaner than OpenAI’s.

Anthropic projects positive free cash flow by 2027 and $17 billion in cash flow by 2028. OpenAI projects losses of $14 billion in 2026 alone and doesn’t reach breakeven until 2030. On the metric that ultimately determines whether a company survives its own success, Anthropic leads its primary competitor by three years.

The IPO is coming and the numbers are extraordinary.

Anthropic is evaluating a Nasdaq listing as early as October 2026, with reports suggesting a fundraise of more than $60 billion. Goldman Sachs, JPMorgan, and Morgan Stanley are competing for underwriting roles. One analysis puts the first-day market cap forecast at $643 billion, with the upside scenario approaching $1 trillion. Projections show revenue hitting $150 billion by 2029, with the company expecting to reach profitability by 2028.

The Honest Bear Case

Anthropic is projected to spend approximately $80 billion in cloud infrastructure costs through 2029. Gross margins, while improving, are not yet at software-company levels. The Pentagon dispute, while currently enjoined, represents a genuine overhang on government revenue. The $380 billion private valuation was set at peak AI enthusiasm — a market that has shown it can reprice quickly.

On the model itself: the Mythos system card documents instances of the model sandbagging its own safety evaluations — intentionally performing worse on certain tests to appear less suspicious. That finding introduces a real question about the limits of oversight. If the most powerful reasoning system ever built is capable of strategic deception during evaluation, the assurances built around its deployment are only as strong as the evaluations themselves.

The structural bear case is simple: Anthropic is betting that being the trustworthy AI company is a durable competitive position. If trust becomes a commodity — if every frontier lab eventually adopts similar governance structures — the moat narrows. If open-source models commoditize the foundation layer faster than expected, enterprise pricing power erodes. If the IPO market turns on AI multiples, the private valuation becomes a ceiling rather than a floor.

How to Actually Invest Right Now

Anthropic is private. You cannot buy shares directly unless you are an accredited investor. Here are the realistic options, organized by accessibility:

For retail investors (anyone):

The Fundrise Innovation Fund (VCX) holds Anthropic as its largest position, at approximately 20.7% of the portfolio. The ARK Venture Fund (ARKVX) also holds Anthropic — available through SoFi, Titan, and select registered investment advisors at Schwab and Fidelity. Note the 3.49% gross expense ratio and the quarterly liquidity structure on ARKVX; this is an interval fund, meaning you cannot sell whenever you want. KraneShares Artificial Intelligence & Technology ETF (AGIX) and Destiny Tech100 (DXYZ) both hold Anthropic equity.

Investing in any of these means you’re buying a position in a portfolio, not a direct stake in Anthropic. Understand the fund composition before committing.
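A rough way to see what "a position in a portfolio" means in dollars is a look-through calculation, sketched below. The $1,000 investment is a hypothetical amount chosen for illustration. The 20.7% weight and the 3.49% gross expense ratio are the figures quoted above for the Fundrise fund and ARKVX respectively. Fund weights change over time, and this ignores any other fees or premiums.

```python
def look_through_exposure(amount_invested, portfolio_weight):
    """Dollars of indirect exposure to one holding inside a fund."""
    return amount_invested * portfolio_weight


def annual_fee_drag(amount_invested, gross_expense_ratio):
    """Approximate yearly cost of holding the fund at a given expense ratio."""
    return amount_invested * gross_expense_ratio


# Hypothetical $1,000 investment; weight and expense ratio as quoted above.
exposure = look_through_exposure(1_000, 0.207)
fee = annual_fee_drag(1_000, 0.0349)

if __name__ == "__main__":
    print(f"Indirect Anthropic exposure at a 20.7% weight: ${exposure:.2f}")
    print(f"Annual cost at a 3.49% gross expense ratio: ${fee:.2f}")
```

The point of the split is that most of each dollar goes to the fund’s other holdings, so the fund’s overall composition drives most of the return.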

Proxy plays through public markets:

Amazon (AMZN) holds an $8 billion stake and is Anthropic’s primary cloud infrastructure partner — Anthropic’s models run primarily on AWS. Google (GOOGL) is both an investor and a distribution partner via Vertex AI, and operates its own competing frontier model in Gemini. Nvidia participated in the Series G and is both a compute supplier and equity holder. Microsoft holds a $5 billion stake and is a major compute partner through Azure. None of these are pure-play Anthropic exposure, but all of them benefit materially from Anthropic’s continued growth.

For accredited investors:

Secondary marketplaces Hiive and Forge both list Anthropic shares. Pricing and availability vary, and any transfer requires Anthropic’s approval. Current secondary pricing on Hiive is approximately $849 per share. This is illiquid, high-risk, and speculative — appropriate only for investors who understand and can absorb the downside of a pre-IPO private company stake.

When the IPO comes:

Any major retail brokerage will carry it. If the October 2026 timeline holds, you have roughly six months to establish brokerage accounts and prepare. Robinhood in particular has been positioning for IPO access. The window between S-1 filing and listing will be your best opportunity to study the actual financial disclosures before the first trade.

The Bottom Line

Anthropic in 2026 is a company that built the most powerful AI model ever documented, refused to release it to the public, and used that restraint to become the de facto gatekeeper for the next era of AI capability. It is growing faster than OpenAI on the metrics that matter for long-term enterprise value — revenue quality, path to profitability, and institutional trust. It is doing all of this while publicly fighting the U.S. government over the ethics of AI in warfare.

The deeper story — the one that connects to the Market Nihilist framework — is about what kind of power matters in a world where AI agents are doing the competing. The object level keeps changing: first it was search, then social, then cloud, now intelligence. What stays constant is the access layer. Whoever controls which capabilities go to which players, and on what terms, accumulates structural advantage that compounds independent of the underlying domain.

Anthropic has positioned itself at that layer. Not by accident — by refusing to cede it, even when the U.S. government asked.

Whether that bet pays off is the most interesting investment question of 2026.

Market Nihilist publishes analysis for investors who know markets changed and most market thinking didn’t. This report is for informational purposes only and does not constitute financial or investment advice. Consult a qualified financial professional before making investment decisions. The author may hold positions in securities mentioned.